In the true spirit of Hashi@Home, it’s time to loop back on ourselves and take a closer look at the beginning. A few posts back, I wrote about solid base images for automation, where I described a more perfect approach to building base images that were ready to roll as soon as they were deployed into the cluster.

I keep having to remind myself that the real difference between Hashi@Home and a real Cloud-Native environment is that images in the latter are typically immutable and machines are disposable. If something is changed upstream in the deployment pipeline, then a new machine is deployed and the existing one is disposed of. I do not have this luxury with my setup at home because – again! – real hardware, physical bits.

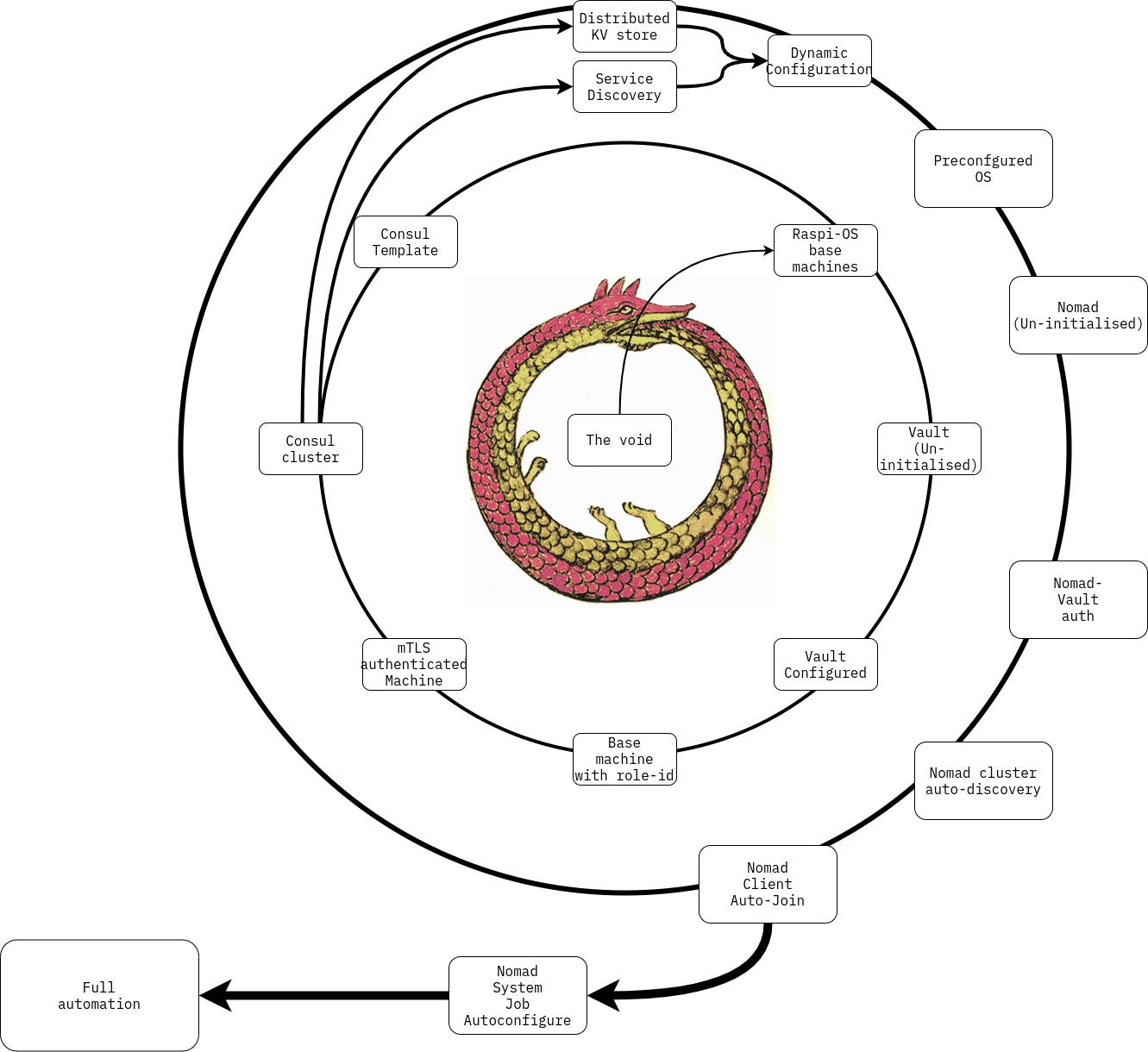

I also need to remind myself that there is a level-crossing, self-reinforcing feedback loop¹ in creating these things. I am perpetually fascinated by these loops. Sometimes they appear as confounding circular reasoning, sometimes I catch a hint of something truly deep, and sometimes, when I look at them closely, they seem banal.

Every time I try to represent the way that a system changes once it starts interacting with itself, I catch myself wondering if I am really drawing the right picture, or if I am perhaps missing some obvious piece of information that only an ignoramus wouldn’t immediately see.

For every computatom which enters Hashi@Home, there is an initial state where it emerges from the void. From dead metal and plastic, we manage to make it compute things by putting an operating system on it and zapping it with some electricity.

From nothing, we deploy bare machines with only an OS, and the only thing they know about is themselves and the network that they are connected to. The principles of zero-trust mean that they can’t just join existing infrastructure without credentials, but those credentials are generated by the infrastructure, which is itself a higher-order manifestation of the computatoms themselves.

Confused yet? This sounds like a paradox, but we all know that deploying these services is not only possible, but also well-documented and already working! Clearly something is happening to break this paradox. Let’s take a closer look.

Picturing a Paradox

How do we really get to a system which is aware of itself² and able to configure its own components?

There are three level-crossing loops here, with the mythical “Ouroboros” representing the eternal paradox of self-referential loops.

The first is trivial – from the void emerges a computatom. It doesn’t matter whether they emerge one at a time or all at once; the fact is that they know nothing about each other. If we imbue the void with some form of esoteric knowledge, we are basically doing a Deus ex machina, inventing a god. This is indeed often how we have to proceed, and we are the unwitting gods of our universe, but invoking magical omnipotence has always gone against my personal tendencies.

The Terraformer

I have, dear reader, cheated in the pretty picture above, in that I have not yet found a way to emerge from the void without minor supernatural intervention. It is not all self-organisation: there is indeed some knowledge that needs to be injected into the system to put it into a position to cross a level. Let us not forget that this whole “Strange Loop” is an imperfect analogy and that these are very simple machines, so I’m not too embarrassed by this admission. My aim here is to tell a story after all, not write a simple manual!

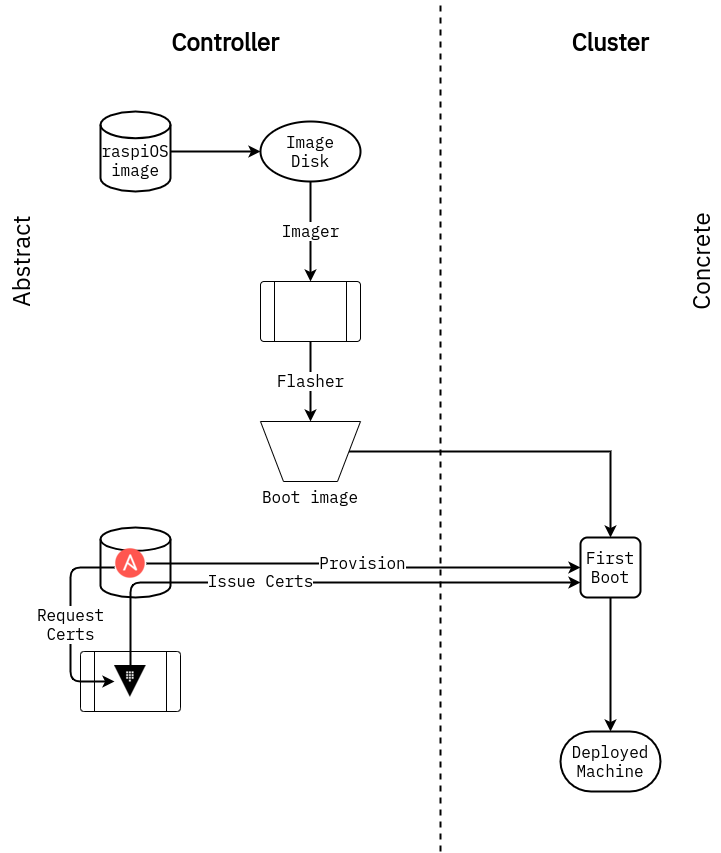

Be that as it may, we do need something in the form of a “Controller”, knowledge or data that lives outside of the current level of complexity of the system itself. This takes the form, at the various steps of evolution, of a set of configuration files, the application of a desired state which has been written prior to emergence from the void.

I find HashiCorp’s choice of name for “Terraform” to be particularly apt here. Consider the real evolution of the Earth or any other physical system in the universe. It is the result of nothing more than the effect of physical laws acting at any given time on physical systems. There is no esoteric knowledge, no supernatural controller, and yet it can result in a system such as the Earth which is capable of generating life, and even life such as ours which is in turn endowed with its own generative capabilities. The Earth has been terraformed, but it has terraformed itself, exactly as has every other planet we have ever observed.

We, however, turn our gaze towards nearby systems and imagine imposing our desired state on them; we fancy our ability to terraform – the transitive form of the verb – an environment. Certainly in systems of our own making, this is our default approach and we have done it to everything that we have ever come into contact with, so long as it is of a scale amenable to the limits of our capability at any given time.

When we really do have machines that will be able to cross complexity levels, we will probably call it “artificial life” or “artificial intelligence”³, but for now, we will need to do a Prometheus on our system.

The Controller

We need something to apply configuration, to subtly change the internal state of the system iteratively, until it finds itself back at the original starting point, but with a new capability. For the moment, this is me, as I create these machines, cry “It’s Alive!” as they join the network, and then gently introduce them to their peers via layers of configuration. It is entirely possible that this procedure could be encoded in some form of automation whereby a machine controller⁴ acts on the system as its components emerge from the void. The way it seems to me now is that it is necessary to break the symmetry and impose a direction on this evolution, that direction coming of course from my mind, and taking the form of components and predefined workflows which combine them. Function (in the form of Ansible roles and Terraform modules) is imposed on the system via a contragrade external agent.

To bring it back to the mundane, we can represent the transitions between states in our emergence diagram above by drawing the separation between the controller and the cluster, and putting in the specific functions which allow each transition. Once we have generated the “Vault” function by completing one circuit of the Strange Loop, we are able to invoke it for computatoms entering the universe.
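To make that transition a little more concrete, here is a toy sketch in Python. It is not how Hashi@Home is actually implemented: the `Computatom`, `Cluster` and `Controller` classes, the token strings and the `complete_loop` step are all hypothetical names invented for illustration, and no real Vault, Ansible or Terraform API is being called. It only tries to show the asymmetry described above: before one circuit of the loop is complete, credentials have to come from outside the system, and afterwards the cluster can issue them itself.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Computatom:
    """A bare machine: it knows only its own name until it is admitted."""
    name: str
    token: Optional[str] = None  # no credential when emerging from the void


@dataclass
class Cluster:
    """The higher-order system made up of admitted computatoms (hypothetical model)."""
    members: list = field(default_factory=list)
    capabilities: set = field(default_factory=set)

    def issue_token(self, atom: Computatom) -> str:
        # The cluster can only issue credentials once the "vault" function exists.
        if "vault" not in self.capabilities:
            raise RuntimeError("no vault function yet: cannot issue credentials")
        return f"cluster-issued:{atom.name}"


class Controller:
    """The external agent: knowledge that lives outside the cluster."""

    def admit(self, cluster: Cluster, atom: Computatom) -> str:
        """Admit a computatom and report where its credential came from."""
        if "vault" in cluster.capabilities:
            # The loop has been completed: the system provisions itself.
            atom.token = cluster.issue_token(atom)
            source = "cluster"
        else:
            # The "minor supernatural intervention": injected from outside.
            atom.token = f"controller-issued:{atom.name}"
            source = "controller"
        cluster.members.append(atom)
        return source

    def complete_loop(self, cluster: Cluster) -> None:
        """One circuit of the loop: existing members are configured to provide Vault."""
        if cluster.members:
            cluster.capabilities.add("vault")


def main() -> None:
    controller, cluster = Controller(), Cluster()
    print(controller.admit(cluster, Computatom("first")))   # credential from outside
    controller.complete_loop(cluster)
    print(controller.admit(cluster, Computatom("second")))  # credential from the cluster


if __name__ == "__main__":
    main()
```

The only design choice worth noting is that `admit` always lives in the controller: even after the cluster gains the Vault function, something outside it still decides when to invoke that function, which is the broken symmetry described in the previous section.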

Thinking clearly

Many of the ideas here come to you mangled by my re-interpretation of them from their originators. The idea of genesis from the void is perhaps as old as human consciousness, so innumerable references could be made to previous thinkers. I make absolutely no attempt to place myself amongst the likes of Plato! However, ideas are free to use, and I claim it as my right as a member of the human race. The more modern and scientific formulations of emergence referred to here come from the works of Douglas Hofstadter and Terrence Deacon:

- “Incomplete Nature: How Mind Emerged from Matter”, Terrence W. Deacon (2012) ISBN 0393049914

- “I am a Strange Loop”, Douglas Hofstadter (2007) ISBN 0465030785

Forgive this brief excursion into the realm of the meta, dear reader. Hashi@Home is not just a tool for learning how to use my favourite products to do Cloud Native things on a budget, it is also an opportunity to reflect on mysteries and paradoxes… in a word, to think. Understanding requires reflection and critical interrogation of one’s true comprehension of the little pictures we draw, and of the more primitive symbols which represent them in our minds.

I hope that this is not a sterile exercise in analysis, but rather something which can help me to reason more clearly about the way in which we design and deliver services, thinking from the point of view of the human operator.

Footnotes and References

1. I am referring to my flawed interpretation of what Hofstadter calls a Strange Loop in the book “I am a Strange Loop”, as well as to the concept of “autogenesis” or “abiogenesis”, which I read about for the first time in Terrence Deacon’s book Incomplete Nature.

2. I don’t mean “self-aware” in the way that a conscious being is aware of itself, but rather that the system has a means of asking questions about itself, a means of performing actions on itself, etc. It is a slippery concept, so bear with me.

3. If anyone is aware of something which corresponds to such a definition, please tell me about it!

4. I.e. a machine that acts as a controller, but also a controller that is actually a machine.